Dana Moochnick · Deep Dive · 6 min read

Beyond behavior: How machine learning decodes consciousness and forecasts seizures from brain activity

Reflections on a seminar exploring how AI and brain network analysis can transform patient care

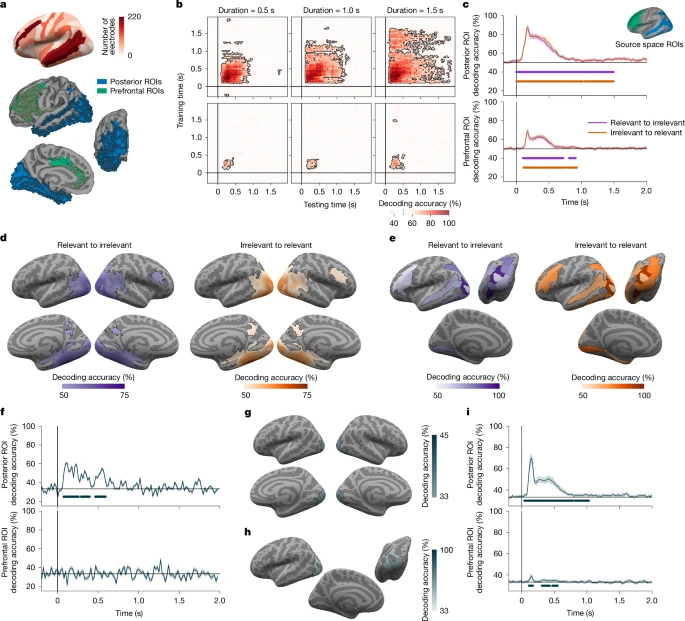

Image credit: Nature (2025). Adversarial testing of global neuronal workspace and integrated information theories of consciousness.

There is something genuinely exciting about starting a new semester by being reminded how much we still do not know, and how many people are actively working to figure it out.

At the first Biomedical Engineering Seminar of the spring semester at UMass Amherst’s Riccio College of Engineering, Aya Khalaf, PhD, from the Yale School of Medicine presented “Decoding Brain Networks for Improved Patient Quality of Life.” From the beginning, it was clear this would be one of those talks that stays with me long after I leave the room. As someone with a background in both wet lab neuroscience research and computational modeling, I find that seminars like this reinforce how essential computational tools and AI have become for transforming complex neural data into insights that can meaningfully impact patient care.

Studying Consciousness Beyond Behavior

At its core, the seminar focused on consciousness: what it is, how we detect it, and how it can be disrupted in neurological disease. Consciousness feels intuitive when we experience it ourselves, yet scientifically it remains one of the biggest open questions in neuroscience, with major implications for medicine, ethics, and patient care. This is a topic I am personally very interested in, especially because I previously learned about coma states and how patients can still retain forms of consciousness even when they appear behaviorally unresponsive.

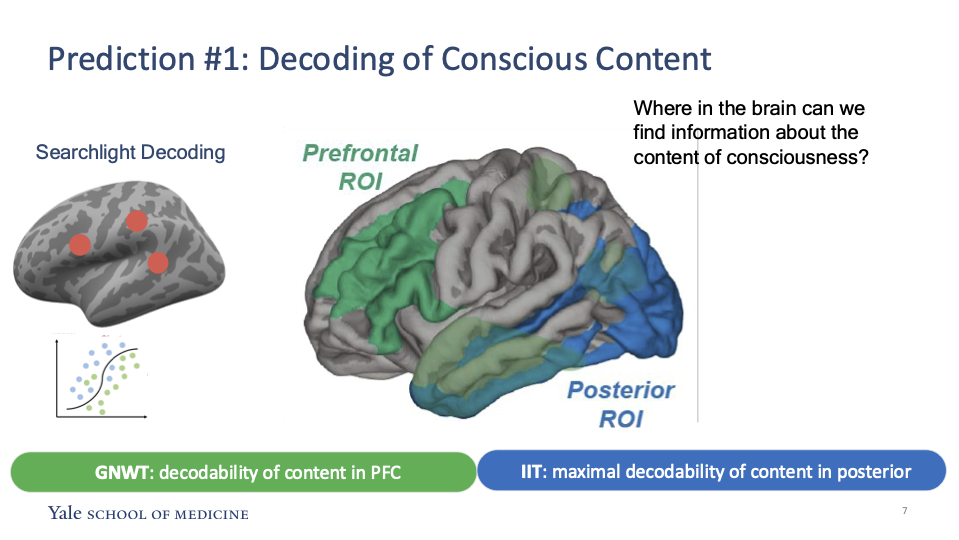

Dr. Khalaf’s work combines computational modeling, AI, electrophysiology, and neuroimaging to study how conscious perception emerges from coordinated activity across brain networks. One key idea explored was decoding the content of consciousness, not just determining whether someone is conscious at all. Rather than asking a simple yes or no question about awareness, this approach focuses on identifying what a person is actually perceiving. For example, neural activity patterns in specific brain regions can be used to distinguish whether someone is seeing a face versus an object, offering a more nuanced view of conscious experience.

Decoding conscious content using distributed brain networks across prefrontal and posterior regions (Khalaf et al. 2025).
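
As a rough illustration of what "decoding content" means computationally, here is a minimal sketch of a nearest-centroid decoder that labels a trial as "face" or "object" from its activity pattern. This is not the method from the talk: the data are simulated, and the names `simulate_trial`, `fit_nearest_centroid`, and `predict`, the eight recording sites, and the noise level are all assumptions made for illustration.

```python
import random
from statistics import fmean


def centroid(patterns):
    """Mean activity pattern (one value per recording site) across trials."""
    return [fmean(site_values) for site_values in zip(*patterns)]


def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def fit_nearest_centroid(patterns, labels):
    """Learn one average pattern per label from the training trials."""
    return {
        label: centroid([p for p, l in zip(patterns, labels) if l == label])
        for label in set(labels)
    }


def predict(model, pattern):
    """Return the label whose average pattern is closest to this trial."""
    return min(model, key=lambda label: squared_distance(model[label], pattern))


def simulate_trial(rng, label, n_sites=8):
    # Hypothetical data: "face" trials raise activity at the first half of the
    # sites, "object" trials at the second half, plus Gaussian noise.
    template = [1.0 if (i < n_sites // 2) == (label == "face") else 0.0
                for i in range(n_sites)]
    return [t + rng.gauss(0.0, 0.5) for t in template]


rng = random.Random(0)
labels = ["face", "object"] * 100
trials = [simulate_trial(rng, label) for label in labels]

# Train on the first 150 trials, evaluate on the 50 held-out trials.
model = fit_nearest_centroid(trials[:150], labels[:150])
test_x, test_y = trials[150:], labels[150:]
accuracy = sum(predict(model, x) == y for x, y in zip(test_x, test_y)) / len(test_y)
print(f"held-out decoding accuracy: {accuracy:.2f}")
```

The key design point is the held-out evaluation: a decoder only tells us something about perceived content if it generalizes to trials it was not trained on.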

Seeing Consciousness Hidden in Brain Signals

One moment that really stuck with me was when Dr. Khalaf discussed patients diagnosed with unresponsive wakefulness syndrome. These patients appear behaviorally unresponsive, yet when they are asked to imagine movement, neuroimaging can reveal patterns of brain activity similar to those seen in minimally conscious individuals. This highlights how behavioral tests alone may not fully capture internal awareness.

From a technical perspective, the work isolates neural signals associated with conscious perception, meaning when a stimulus is actively experienced and reportable by the individual (for example, intentionally imagining a movement). This is distinct from general stimulus processing, which refers to automatic neural responses that can occur even when a stimulus is not consciously perceived (for example, blinking without thinking about it). To study this difference, researchers examined stimulus detection networks using intracranial EEG (electroencephalogram) recordings with thousands of implanted electrodes. In this context, target stimuli were those that participants were instructed to attend to or respond to, while non-target stimuli were presented but did not require conscious attention or action. Because both types of stimuli activate the brain at some level, careful signal isolation is needed to identify neural activity that truly reflects conscious awareness rather than passive sensory processing.
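
One simple way to picture this kind of signal isolation is a condition contrast: average the recordings time-locked to target stimuli, do the same for non-target stimuli, and subtract. Activity shared by both conditions cancels, and what remains is specific to targets. The sketch below uses simulated epochs, and the function names, sample counts, and response windows are assumptions for illustration rather than the analysis presented in the seminar.

```python
import random
from statistics import fmean


def event_related_average(epochs):
    """Average stimulus-locked epochs sample by sample to suppress noise."""
    return [fmean(samples) for samples in zip(*epochs)]


def condition_contrast(target_epochs, nontarget_epochs):
    """Target average minus non-target average at each time sample."""
    target_avg = event_related_average(target_epochs)
    nontarget_avg = event_related_average(nontarget_epochs)
    return [t - n for t, n in zip(target_avg, nontarget_avg)]


def simulate_epoch(rng, is_target, n_samples=30):
    # Hypothetical single-electrode epoch made of unit-variance noise.
    epoch = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    for i in range(5, 10):        # early response shared by both conditions
        epoch[i] += 1.0
    if is_target:
        for i in range(15, 20):   # later component present only for targets
            epoch[i] += 1.0
    return epoch


rng = random.Random(0)
target = [simulate_epoch(rng, True) for _ in range(200)]
nontarget = [simulate_epoch(rng, False) for _ in range(200)]

contrast = condition_contrast(target, nontarget)
early = fmean(contrast[5:10])    # shared sensory response should cancel out
late = fmean(contrast[15:20])    # target-specific component should remain
print(f"early window contrast: {early:.2f}, late window contrast: {late:.2f}")
```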

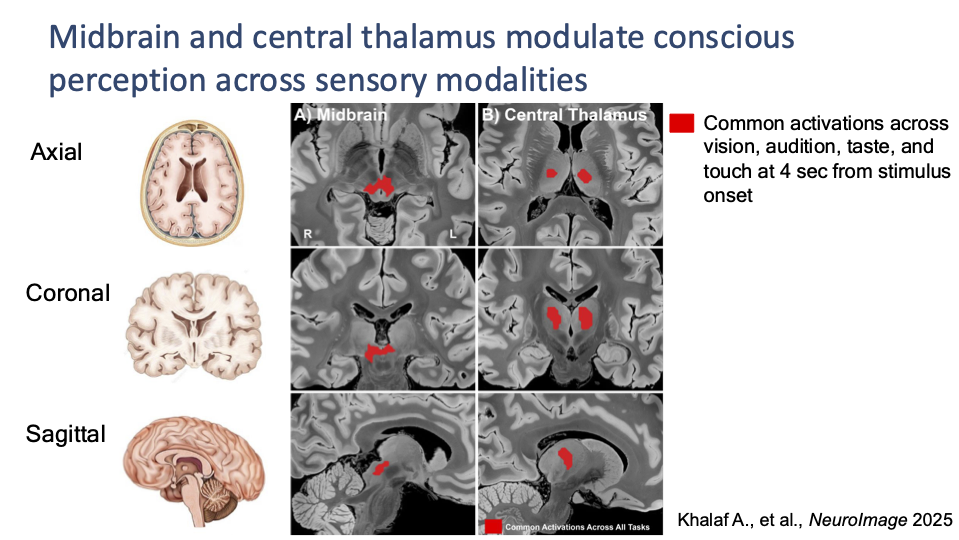

The functional MRI (fMRI) analyses stood out to me because they showed how brain imaging can be used to study not just where activity occurs, but how conscious experience is coordinated across networks. By tracking changes in blood oxygen level dependent (BOLD) signals as participants shifted from fixation blocks, in which they rested and focused on a static point, to task blocks, in which they actively processed sensory information, researchers could observe how brain activity changed with conscious engagement. Using mathematical pattern recognition methods to identify groups of brain regions that consistently activated together, the study highlighted the midbrain and central thalamus as key hubs that amplify and coordinate signals across vision, hearing, and touch. While these regions have long been linked to consciousness, identifying them through data-driven network analysis helped validate the approach and reinforced the idea that consciousness emerges from distributed brain systems rather than isolated regions.

Midbrain and central thalamus involvement in conscious perception across sensory modalities (Khalaf et al. 2025).
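
The summary above does not specify which pattern recognition method was used, so the following is only a generic sketch of the underlying idea: regions whose time courses rise and fall together can be grouped by thresholding their pairwise correlations. The simulated signals, the `coactivation_groups` function, and the 0.6 threshold are all assumptions for illustration.

```python
import numpy as np


def coactivation_groups(timeseries, threshold=0.6):
    """Group regions (rows) that are linked by correlations above the threshold.

    timeseries has shape (n_regions, n_timepoints). Groups are the connected
    components of the graph whose edges are above-threshold correlations.
    """
    corr = np.corrcoef(timeseries)
    unvisited = set(range(corr.shape[0]))
    groups = []
    while unvisited:
        stack, group = [min(unvisited)], set()
        while stack:
            i = stack.pop()
            if i in group:
                continue
            group.add(i)
            stack.extend(j for j in unvisited
                         if j not in group and corr[i, j] > threshold)
        unvisited -= group
        groups.append(sorted(group))
    return groups


# Hypothetical data: regions 0-2 follow one latent signal, regions 3-4 another.
rng = np.random.default_rng(0)
latent = rng.standard_normal((2, 300))
regions = latent[[0, 0, 0, 1, 1]] + 0.3 * rng.standard_normal((5, 300))

groups = coactivation_groups(regions)
print(groups)
```

In real fMRI data the grouping would be far less clean, which is part of why recovering well-known hubs from a data-driven analysis is a useful sanity check.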

Using Machine Learning to Predict Loss of Consciousness Before a Seizure

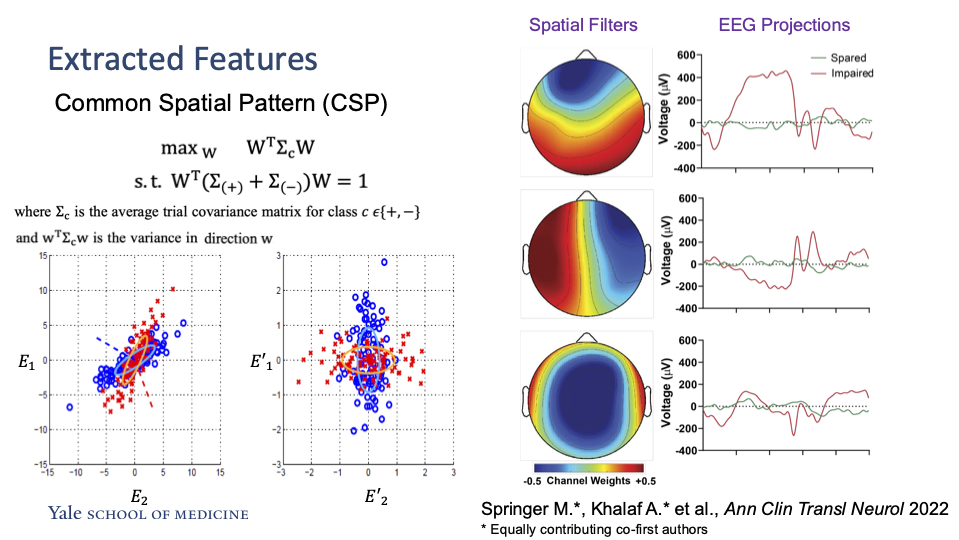

The part of the talk that stood out to me the most focused on epilepsy and the use of machine learning to understand how seizures affect consciousness. Dr. Khalaf discussed absence seizures, a type of seizure in which patients may briefly lose awareness or responsiveness, often without obvious physical convulsions. Because these seizures can be subtle and difficult to detect behaviorally, understanding their neural signatures is especially important. Using EEG data, Dr. Khalaf presented machine learning models trained to predict whether an absence seizure would impair consciousness by comparing brain activity before a seizure begins and during the seizure itself. The models revealed clear differences in neural activity patterns between these two brain states, showing that measurable changes in network behavior emerge even before consciousness is disrupted. One key insight was that healthier brain networks tend to produce more complex neural signals, while impaired consciousness is associated with reduced signal complexity.
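
To get an intuition for "signal complexity," here is a small sketch using permutation entropy, one common complexity measure for time series. The talk summary does not say which measure was used, so this choice is an assumption, and both signals below are synthetic stand-ins (a perfectly rhythmic wave and irregular noise) rather than EEG.

```python
import random
from collections import Counter
from math import factorial, log2, pi, sin


def permutation_entropy(signal, order=3):
    """Normalised permutation entropy: near 0 is very regular, 1 is maximally irregular.

    Each window of `order` consecutive samples is reduced to its ordinal
    pattern (the ranking of its values); the entropy of the pattern
    distribution is divided by the entropy of a uniform distribution.
    """
    patterns = Counter(
        tuple(sorted(range(order), key=lambda k: signal[i + k]))
        for i in range(len(signal) - order + 1)
    )
    total = sum(patterns.values())
    entropy = -sum((c / total) * log2(c / total) for c in patterns.values())
    return entropy / log2(factorial(order))


rng = random.Random(0)
rhythmic = [sin(2 * pi * i / 50) for i in range(1000)]   # highly regular signal
irregular = [rng.gauss(0.0, 1.0) for _ in range(1000)]   # unstructured signal

pe_rhythmic = permutation_entropy(rhythmic)
pe_irregular = permutation_entropy(irregular)
print(f"rhythmic: {pe_rhythmic:.2f}, irregular: {pe_irregular:.2f}")
```

The regular signal scores lower because most of its windows are simply rising or falling, while the irregular signal visits every ordinal pattern about equally often.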

Technically, this approach involved extracting informative features from EEG signals that highlight differences between the brain states before and during a seizure. By applying Common Spatial Pattern analysis, the models emphasized spatial patterns of neural activity that best distinguished seizures that impaired consciousness from those that did not. Since consciousness during seizures is typically assessed through behavior alone, identifying reliable neural markers could eventually help clinicians anticipate loss of awareness and support earlier or more targeted interventions for patients. I am particularly interested in the machine learning side of this work because AI has the potential to support clinical decision making while also improving cost effectiveness. If we can rely on robust ML models to predict impaired consciousness from EEG data, it could reduce the need for extensive behavioral testing and long monitoring sessions, making epilepsy evaluation more accessible and scalable in real clinical settings.

Extracting EEG features using Common Spatial Pattern analysis to distinguish impaired and spared consciousness during seizures (Springer, Khalaf, et al. 2022).
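
For readers who have not met Common Spatial Pattern (CSP) analysis before, the sketch below shows the standard two-class formulation in NumPy: whiten the combined covariance of both classes, then rotate so that each resulting spatial filter maximizes variance for one class while minimizing it for the other. It is a textbook-style illustration under stated assumptions, not the pipeline from the cited paper. The simulated trials, the variable names `trials_impaired` and `trials_spared`, the channel count, and the choice of one filter pair are hypothetical.

```python
import numpy as np


def mean_covariance(trials):
    """Average trace-normalised spatial covariance; trials: (n_trials, n_channels, n_samples)."""
    covs = [x @ x.T / np.trace(x @ x.T) for x in trials]
    return np.mean(covs, axis=0)


def csp_filters(trials_a, trials_b, n_pairs=1):
    """Return 2 * n_pairs spatial filters (rows) separating class a from class b."""
    cov_a, cov_b = mean_covariance(trials_a), mean_covariance(trials_b)
    # Whiten the composite covariance so that cov_a + cov_b becomes the identity.
    evals, evecs = np.linalg.eigh(cov_a + cov_b)
    whitener = np.diag(evals ** -0.5) @ evecs.T
    # eigh sorts eigenvalues in ascending order: the first filters carry the
    # least class-a variance (most class-b), the last filters the most class-a.
    _, rotation = np.linalg.eigh(whitener @ cov_a @ whitener.T)
    filters = rotation.T @ whitener
    keep = np.r_[0:n_pairs, -n_pairs:0]
    return filters[keep]


def log_variance_features(trials, filters):
    """Project each trial through the filters and take normalised log-variance."""
    projected = np.einsum("fc,tcs->tfs", filters, trials)
    var = projected.var(axis=2)
    return np.log(var / var.sum(axis=1, keepdims=True))


# Hypothetical data: 4 channels; one class has extra power on channel 0,
# the other on channel 3.
rng = np.random.default_rng(0)
trials_impaired = rng.standard_normal((30, 4, 200))
trials_impaired[:, 0, :] *= 3.0
trials_spared = rng.standard_normal((30, 4, 200))
trials_spared[:, 3, :] *= 3.0

filters = csp_filters(trials_impaired, trials_spared)
feats_impaired = log_variance_features(trials_impaired, filters)
feats_spared = log_variance_features(trials_spared, filters)
print(feats_impaired.mean(axis=0), feats_spared.mean(axis=0))
```

The log-variance features are what a downstream classifier would typically consume; with only two features per trial, even a simple linear model can separate classes that differ in their spatial power distribution.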

Looking Ahead

This semester, I want to be intentional about attending seminars and writing about what I learn. Research evolves quickly, and staying curious and engaged with work happening now feels like an important part of being a student in science and engineering.

Today was a strong start.